Agentic AI in Treasury Management: From Automation to Action

Most CFOs and treasury teams have now sat through plenty of AI vendor demos. They've seen the dashboards, heard the pitch about productivity gains, and walked away asking the same question: "What does this actually do for me, in my environment, with my messy data?"

That skepticism is rational. Treasury is one of the most consequence-heavy functions in any enterprise. A bad call isn't a missed quarterly target. It's a liquidity event, a covenant breach, or a regulatory issue. The culture is conservative by design, and that conservatism is a feature, not a flaw.

So before examining what's possible with AI treasury management, it's worth being honest about where the industry actually stands today.

Where Treasury AI Adoption Really Stands

Citi's GenAI in Treasury practitioners report offers the clearest current picture. According to the report, 82% of organizations are at an early exploration stage, and only 3% have reached anything resembling organizational-scale value. Those figures aren't a verdict on the technology. They reflect how much foundational work most treasury teams haven't completed yet.

The gap between identifying AI opportunities and actually mobilizing them is wider in finance than in almost any other enterprise function. Fewer than 50% of identified AI opportunities in treasury have been validated for proof of concept, according to Citi's Treasury Diagnostics research. The reasons cited most frequently are limited internal resources, data quality challenges, and uncertainty about which tools are actually fit for a regulated financial environment.

Understanding that gap is the starting point for any serious AI treasury management strategy.

The AI Spectrum: Not All "AI" Is the Same

One reason treasury AI adoption is lagging is that the term "AI" covers a wide range of capabilities, and vendors rarely distinguish between them. That ambiguity makes it difficult for finance leaders to evaluate what they're actually buying.

Here's a practical breakdown of what's actually on offer:

- Rules-based automation: These are deterministic, trigger-driven workflows. They are fast, predictable, and useful, but they are not true AI.

- Advanced analytics: This category includes enhanced dashboards and accelerated data visualization. These capabilities are valuable, but they do not represent true AI.

- Generative AI (GenAI): Generative AI synthesizes information, creates narratives, and responds to natural language queries. This technology is what most vendors refer to as "AI-powered." It is reactive and requires a prompt to function.

- Agentic AI: This AI continuously monitors data, detects significant conditions, interprets their meaning, and initiates predefined actions within set boundaries. It functions proactively without requiring a prompt.

The difference between GenAI and agentic AI is the difference between a tool that answers questions and a system that identifies the questions worth asking. That distinction matters enormously in treasury, where the highest-value decisions depend on catching a signal before it becomes a problem.

The Problem Agentic AI Is Built to Solve

Ask any treasury team what consumes most of their analytical bandwidth, and you'll hear variations of the same answer: variance analysis, forecast commentary, policy compliance monitoring, and exception reporting. These tasks are high-skill, high-volume, and time-consuming. They tie up experienced people who should be focused on strategic decisions.

The pattern described by treasury professionals across industries is consistent. A variance surfaces in the 13-week forecast. Someone spends half a day tracing it through business unit submissions, customer payment patterns, and seasonal history. A policy deviation appears in the risk report. Someone manually cross-references exposure data, hedge ratios, and market rates to understand the implications.

This is the "Excel trap" that persists in even sophisticated treasury functions. It's not that teams lack capability. It's that the tools they rely on require them to assemble context manually before they can act on it.

Agentic AI addresses this by operating across four functional layers:

- Monitor: Always-on detection that surfaces critical events without manual intervention. This includes an FX threshold breached at midnight, an emerging cash shortfall, or an overdue forecast submission from a business unit.

- Interpret: Analysis that moves beyond identifying an event to explaining its cause and future implications. It provides root cause analysis, distinguishing significant signals from noise.

- Act: Execution of a defined next step within configured guardrails, such as automatically chasing a missing submission, generating a consolidated report, or routing an escalation with all context pre-assembled.

- Learn: Refinement over time based on team feedback, annotations, and evolving business context.

Most of the market is operating at layer one, or early layer two. The frontier is layer three, executed with the governance that treasury requires.
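
The first three layers can be sketched in a few lines. This is a minimal illustration, not GSmart's implementation: the `Signal` structure, the function names, and the cash figures are all hypothetical, and the Learn layer is left out. It shows a cash balance being checked against a floor, the breach being turned into a readable summary, and a single predefined step (an escalation to a human) being taken.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Signal:
    """A condition detected by the Monitor layer (hypothetical structure)."""
    name: str
    observed: float
    floor: float


def monitor(name: str, observed: float, floor: float) -> Optional[Signal]:
    """Monitor: emit a signal only when the balance falls below its floor."""
    return Signal(name, observed, floor) if observed < floor else None


def interpret(signal: Signal) -> str:
    """Interpret: turn the raw event into context (here, the size of the gap)."""
    gap = signal.floor - signal.observed
    return f"{signal.name} is {gap:,.0f} below its floor of {signal.floor:,.0f}"


def act(summary: str, escalate: Callable[[str], None]) -> None:
    """Act: take one predefined, low-risk step, routing the summary to a human."""
    escalate(summary)


# Illustrative values only.
escalations: list[str] = []
signal = monitor("EUR operating cash", observed=1_800_000, floor=2_500_000)
if signal is not None:
    act(interpret(signal), escalations.append)
```

The point of the separation is that the Act step receives context that is already assembled, and that the only action available to it is one the team configured in advance.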

The Data Problem Nobody Wants to Lead With

Here's the uncomfortable reality that precedes any serious AI treasury management deployment: AI cannot outperform the data it runs on. And in most treasury environments, that data is fragmented, inconsistently structured, and partially manual.

The typical enterprise treasury team is pulling cash data from multiple ERP systems: SAP, Oracle, and sometimes both, running in different regional instances. Bank statements arrive in different formats from 10, 20, or 40-plus institutions. FX data comes from multiple sources. Intercompany positions are reconciled manually every week.

Gartner's research on enterprise AI adoption consistently shows finance and treasury trailing other functions, including IT, supply chain, and marketing. The reasons are structural: strict data governance requirements, regulatory scrutiny on anything that touches a financial statement, and board-level accountability for treasury outcomes.

Citi's research is direct on this point: data quality and accessibility are the primary barriers to AI success in treasury, not model sophistication, not compute cost, not change management. Data comes first.

How GSmart Embeds Agentic AI Into Treasury Workflows

Ripple Treasury's GSmart platform was designed around the questions treasury leaders actually ask when evaluating new technology: Is my data safe? Can I explain this to my auditor? Will it work with how we actually operate?

The GSmart suite operates across four AI functions: discover, infer, reason, and decide. Each module applies the right type of intelligence to the right treasury problem, rather than forcing a single model onto every workflow.

GSmart Forecast Insights

Forecast Insights is an AI agent embedded directly in the cash forecasting workflow. When a variance surfaces, the agent automatically identifies the top drivers, explains what's behind them, determines whether the pattern is temporary or structural, and generates board-ready narrative commentary.

The key distinction: the analysis is available in seconds, not after half a day of manual data assembly. The system also learns from team annotations over time, building domain-specific institutional memory that improves interpretations with each cycle.
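
To make "identifies the top drivers" concrete, here is a rough sketch of the simplest version of that step: ranking cash flow categories by the absolute size of their variance against forecast. The function name, category labels, and amounts are hypothetical, and a production agent would presumably go further by tracing each driver back to business unit submissions and payment patterns.

```python
def top_variance_drivers(forecast: dict[str, float],
                         actual: dict[str, float],
                         n: int = 3) -> list[tuple[str, float]]:
    """Rank categories by absolute variance (actual minus forecast)."""
    categories = sorted(forecast.keys() | actual.keys())  # sorted for stable ties
    variances = {c: actual.get(c, 0.0) - forecast.get(c, 0.0) for c in categories}
    ranked = sorted(variances.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return ranked[:n]


# Illustrative weekly figures (inflows positive, outflows negative).
forecast = {"customer receipts": 5_000_000, "payroll": -1_200_000,
            "supplier payments": -2_000_000, "tax": -300_000}
actual = {"customer receipts": 4_100_000, "payroll": -1_210_000,
          "supplier payments": -2_350_000, "tax": -300_000}

drivers = top_variance_drivers(forecast, actual, n=2)
for category, variance in drivers:
    print(f"{category}: {variance:+,.0f}")
```

With these inputs, customer receipts and supplier payments account for almost all of the shortfall, which is where an analyst would start tracing.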

Underpinning forecast accuracy is GSmart Ledger, a statistical modeling layer that analyzes accounts payable and receivable data to predict future cash flow trends. In production with clients, GSmart Ledger has delivered over 30% improvement in forecast accuracy when the underlying data foundation is clean.
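
A percentage improvement in forecast accuracy can be measured in more than one way, and the article does not say which measure is used. One common reading, assumed here, is a relative reduction in mean absolute percentage error (MAPE). The sketch below uses made-up numbers, not client results, to show what that reading implies: forecast error falling from 10% to 7% is a 30% relative improvement.

```python
def mape(actuals: list[float], forecasts: list[float]) -> float:
    """Mean absolute percentage error; lower is better. Assumes no actual is zero."""
    return sum(abs(a - f) / abs(a) for a, f in zip(actuals, forecasts)) / len(actuals)


def relative_improvement(baseline_error: float, new_error: float) -> float:
    """Share of the baseline error that was eliminated."""
    return (baseline_error - new_error) / baseline_error


# Illustrative data only: four periods of actual cash flow and two sets of forecasts.
actuals = [100.0, 200.0, 400.0, 500.0]
baseline_forecasts = [90.0, 220.0, 360.0, 550.0]   # each period is off by 10%
improved_forecasts = [93.0, 214.0, 372.0, 535.0]   # each period is off by 7%

baseline = mape(actuals, baseline_forecasts)
improved = mape(actuals, improved_forecasts)
improvement = relative_improvement(baseline, improved)
print(f"MAPE {baseline:.1%} -> {improved:.1%}, relative improvement {improvement:.0%}")
```

When comparing vendor claims, it is worth asking which error metric and which baseline sit behind the headline number.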

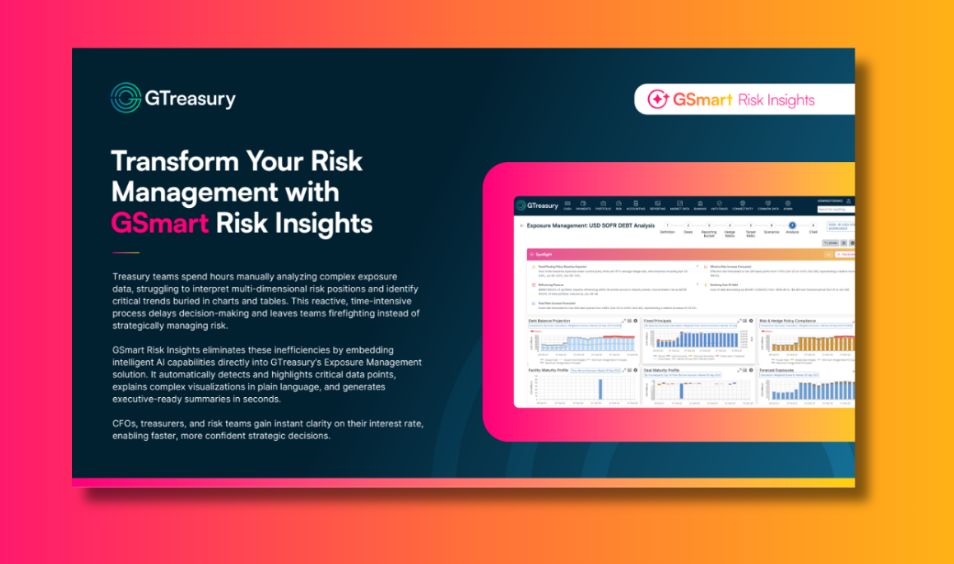

GSmart Risk Insights

The same agentic architecture applied to cash forecasting extends to exposure management through Risk Insights, which surfaces approaching maturities, policy violations, significant rate changes, and hedge ratio deviations. Each item links to a full contextual analysis covering what's driving it, what historical patterns look like, what the risk implications are, and what options exist.

The operational shift is significant. Policy breaches that previously got caught after the fact, because monitoring was periodic rather than continuous, get surfaced in real time. Risk Insights automatically translates complex exposure data into plain-language summaries, making it faster to present a confident position to a board or risk committee.
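
A hedge ratio deviation check is one of the simpler signals in that list to illustrate. The following is a sketch under assumptions: the `Exposure` structure, the 50% to 80% policy band, and the positions are all hypothetical, and real policies often vary the band by currency and tenor.

```python
from dataclasses import dataclass


@dataclass
class Exposure:
    currency: str
    exposure: float  # net exposure, in currency units
    hedged: float    # notional currently hedged


def policy_deviations(exposures: list[Exposure],
                      min_ratio: float,
                      max_ratio: float) -> list[tuple[str, float, str]]:
    """Return (currency, hedge ratio, finding) for positions outside the policy band."""
    findings = []
    for e in exposures:
        if e.exposure == 0:
            continue  # no exposure, so no hedge ratio to evaluate
        ratio = e.hedged / e.exposure
        if ratio < min_ratio:
            findings.append((e.currency, round(ratio, 2), "under-hedged"))
        elif ratio > max_ratio:
            findings.append((e.currency, round(ratio, 2), "over-hedged"))
    return findings


# Illustrative positions checked against a hypothetical 50%-80% policy band.
book = [
    Exposure("EUR", exposure=10_000_000, hedged=4_000_000),
    Exposure("GBP", exposure=5_000_000, hedged=3_500_000),
    Exposure("JPY", exposure=2_000_000, hedged=1_900_000),
]
deviations = policy_deviations(book, min_ratio=0.50, max_ratio=0.80)
```

Run continuously rather than at period end, a check like this is what turns an after-the-fact breach into a real-time alert.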

GSmart Hub: The Next Evolution

Forecast Insights and Risk Insights give treasury teams AI where they already work. The next step is giving them the ability to orchestrate, govern, and scale that intelligence across everything they do.

The GSmart Hub is designed to do exactly that. It combines pre-built agents available in a catalog, event-driven triggers that act when a threshold is breached rather than when a schedule says to check, and a full Operations Center with real-time execution logs and a compliance-ready audit trail. For treasury teams who want to move from individual AI workflows to a genuine operating layer for the CFO office, the Hub is where that becomes possible.

Governance, Security, and the Regulatory Reality

Any CFO evaluating AI treasury management tools needs direct answers to direct questions. Where does the data go during processing? How is this classified under the EU AI Act? What does the audit trail look like?

These aren't hypothetical concerns for organizations operating in regulated markets.

Data residency: GSmart operates within regional AI inference environments. For North American, APAC, and EMEA clients, data never leaves the region at any point in the AI workflow. Not for storage, not for inference, not for processing. Complete tenant isolation is enforced at the application, storage, and AI indexing layers.

Security architecture: Every interaction uses zero-trust authentication. Azure Managed Identity eliminates hardcoded credentials. Role-based access controls operate at every component layer. Data never trains the models. Inference-only operation is verified by audit log, not just contractual assertion. Adversarial red team testing probes AI-specific failure modes, including hallucination under edge case inputs and guardrail bypass attempts.

Regulatory alignment: GSmart use cases are deliberately designed as limited-risk applications under the EU AI Act. Human oversight is present in every workflow. No material financial transaction executes autonomously. Full explainability and complete audit trails are standard.

One distinction worth clarifying: GDPR compliance is not AI governance. GDPR governs data privacy. It doesn't address model explainability, agentic oversight, or AI risk classification. Both frameworks matter. Any vendor that conflates them is worth pressing.

On SOX and PCI compliance specifically: the human-in-the-loop architecture isn't a concession to regulatory caution. It's a design principle. One client came to Ripple Treasury with a precise requirement for using AI agents to prepare proposed payments based on cash positions, and was explicit that a human must review and process all payments. That's the right instinct. A mandatory gate on a high-stakes action is how agentic AI earns trust in treasury, not by removing human judgment, but by making it faster and better informed.
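
The mandatory gate described above can be expressed as a small state machine in which release is impossible without a recorded human approval. This is an illustrative sketch, not a description of any product: the class, the status names, the identifiers, and the amounts are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PROPOSED = "proposed"
    APPROVED = "approved"


@dataclass
class PaymentProposal:
    payee: str
    amount: float
    prepared_by: str  # the agent that assembled the proposal
    status: Status = Status.PROPOSED
    audit_log: list[str] = field(default_factory=list)

    def approve(self, reviewer: str) -> None:
        """Record a human approval; the preparer can never approve its own work."""
        if reviewer == self.prepared_by:
            raise PermissionError("preparer cannot approve its own proposal")
        self.status = Status.APPROVED
        self.audit_log.append(f"approved by {reviewer}")


def release(proposal: PaymentProposal) -> str:
    """Release a payment only if a human approval has been recorded."""
    if proposal.status is not Status.APPROVED:
        raise PermissionError("payment requires human approval before release")
    proposal.audit_log.append("released")
    return f"released {proposal.amount:,.2f} to {proposal.payee}"


# Illustrative flow: the agent prepares, a human approves, then the payment is released.
proposal = PaymentProposal("Acme Supplies", 125_000.0, prepared_by="agent:payments")
try:
    release(proposal)
    blocked = False
except PermissionError:
    blocked = True  # the gate holds: nothing moves without approval

proposal.approve("j.doe")
receipt = release(proposal)
```

The design choice is that the gate lives in the release path itself, so skipping review is a code-level impossibility rather than a policy expectation, and every step leaves an audit entry.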

What's Deployable Now vs. What's Still Ahead

Cutting through vendor claims requires a clear-eyed view of what's actually in production versus what's still on the roadmap.

Available now:

- Forecast variance analysis that identifies drivers and generates executive narrative, reducing hours-long manual processes to minutes

- Signal-based monitoring for risk exposure, liquidity thresholds, and policy compliance

- 30%-plus improvement in forecast accuracy when data foundations are clean (in production with clients)

- Conversational access to treasury data for finance team members who couldn't previously run a TMS query

- Onboarding automation that cuts multi-ERP implementation timelines by more than 50%

Coming next:

- Proposed payment preparation based on cash positions, with mandatory human review

- Counterparty risk monitoring agents that replicate and extend internal alert frameworks inside the TMS

- Scenario analysis and sensitivity modeling driven by market condition signals

Not production-ready:

- Fully autonomous execution of material financial decisions. Any vendor positioning autonomous payment execution or trade authorization as production-ready should be asked directly about their governance architecture. The answer will tell you what you need to know.

The Single Source of Truth, Delivered Before You Think to Ask

The CFO office has always needed a single source of truth across cash positions, exposure, forecasts, and risk. What agentic AI makes possible is a version of that source of truth that doesn't just wait to be queried.

A dashboard tells you what happened. A copilot answers your questions. An agent tells you what matters before you think to ask, and takes a next step within boundaries you've set.

That's the direction treasury technology is heading. The organizations building toward it now, with clean data foundations, governed AI architectures, and human-in-the-loop design, will be positioned to operate with a level of speed and confidence that manual processes can't match.

The question for CFOs evaluating AI treasury management in 2025 isn't whether agentic AI will reshape the function. It's whether your current infrastructure is ready to support it, and whether the vendors you're evaluating can answer the governance questions your board will eventually ask.

Schedule a meeting with Ripple Treasury to see GSmart in action.

Frequently Asked Questions: AI Treasury Management

What is agentic AI in treasury management? Agentic AI in treasury management refers to AI systems that monitor treasury data continuously, detect conditions that require attention, interpret their significance, and initiate a defined next step, all within governance parameters set by the treasury team. Unlike generative AI, which waits to be queried, agentic AI proactively identifies what matters before a human has to go looking.

How is AI treasury management different from traditional automation? Traditional automation is rules-based and deterministic: it runs a fixed process on a schedule or a simple trigger, regardless of the surrounding context. AI treasury management is event-driven and context-aware. It detects when something meaningful has changed, explains why, and takes or recommends a next step informed by the full data context.

Is AI treasury management secure for regulated enterprises? Security depends entirely on the vendor's architecture. The critical questions are: Where does data go during AI processing? Is inference performed within your regional infrastructure? Does your data train the model? What is the audit trail for AI-generated outputs? Platforms like Ripple Treasury's GSmart are built with regional data residency, zero-trust authentication, and inference-only operation verified by audit log.

What does the EU AI Act mean for AI treasury management tools? The EU AI Act classifies AI systems by risk level. Well-designed treasury AI tools are intentionally architected as limited-risk applications: with human oversight in every workflow, no autonomous execution of material financial decisions, full explainability, and complete audit trails. GDPR compliance alone does not satisfy AI Act requirements; the two frameworks address different concerns.

What's the biggest barrier to AI adoption in treasury? According to Citi's practitioner research, data quality and accessibility (not model sophistication or compute cost) are the primary barriers to AI success in treasury. AI cannot outperform the data it runs on. The prerequisite for effective AI treasury management is a unified, validated, governed data layer across ERP systems, bank connectivity, and risk data sources.

